Time is arguably the most important commodity for any software development team. Pressure from higher up in organizations means the latest software releases are expected yesterday. Software development teams therefore face a huge challenge: completing each phase of development in as little time as possible.

During the testing phase, teams can schedule tests to better manage their time. Scheduling tests simply means using existing resources to execute and repeat certain software tests automatically at regular intervals or at specific future points. Scheduling reduces the need for human interaction with the test suite, which lowers the chance of error and saves development teams time.

The rest of this post covers some important metrics for scheduled tests and shows how to optimize them for more efficient scheduling and better use of team resources and time.

Scheduling and Automation

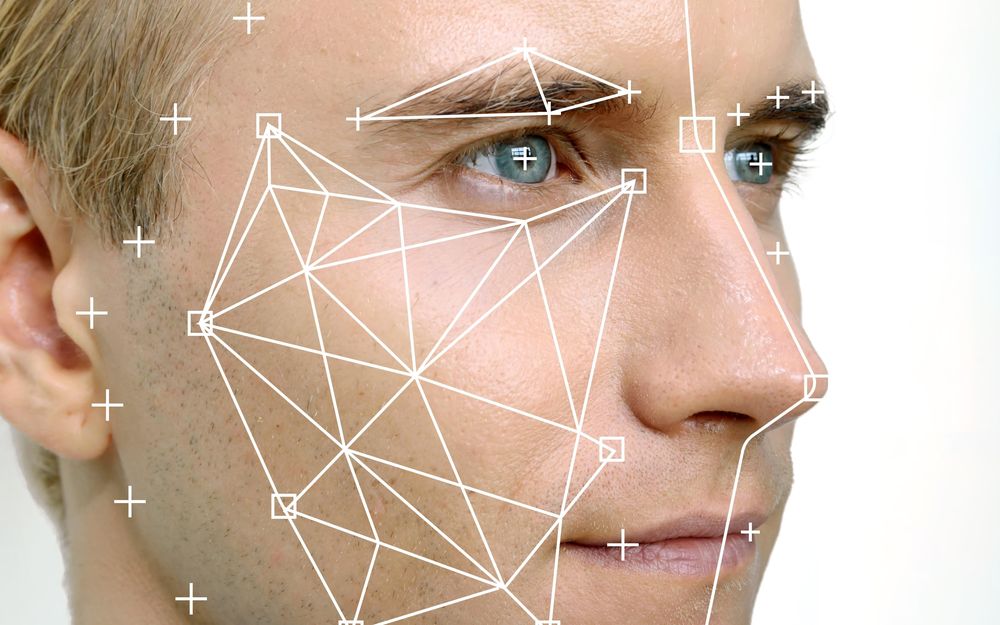

Scheduling can be regarded as a subsystem of the test automation process, because scheduling a test means automatically executing it at some future time. A scheduling tool fits into the automation system as shown in the diagram above.
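To make the idea concrete, here is a minimal sketch of scheduling a test run for a future time with Python's standard `sched` module. The `run_smoke_tests` function and the `FakeClock` class are hypothetical names invented for this illustration. The fake clock only exists so the example finishes instantly; a real setup would more likely rely on the module's default clock, cron, or a CI scheduler.

```python
import sched


class FakeClock:
    """Manually advanced clock (hypothetical helper) so the demo runs instantly."""

    def __init__(self):
        self.now = 0.0

    def time(self):
        return self.now

    def sleep(self, seconds):
        # Instead of really waiting, just move the clock forward.
        self.now += seconds


def run_smoke_tests(log):
    # Placeholder for a real test run; here we only record that it happened.
    log.append("smoke tests executed")


clock = FakeClock()
scheduler = sched.scheduler(timefunc=clock.time, delayfunc=clock.sleep)
log = []

# Schedule the test run 3600 seconds (one hour) in the future, priority 1.
scheduler.enter(3600, 1, run_smoke_tests, argument=(log,))

# Blocks until all scheduled events have run (instantly, with the fake clock).
scheduler.run()
print(log)
```

Dropping the two constructor arguments makes `sched.scheduler()` use real time, so the same code would then wait a full hour before running the tests.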

Schedule Slippage, Schedule Variance As Test Metrics

Schedule Slippage

Schedule slippage is a project metric that can also be applied to testing. As a metric for scheduled tests, schedule slippage measures how long a single test or a test suite has been delayed relative to its original baseline schedule. The following formula can be used to calculate schedule slippage:

Schedule Slippage = ((Actual Project Duration - Planned Project Duration) / Planned Project Duration) * 100
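As a rough sketch, the slippage calculation can be wrapped in a small helper, reading the formula as the difference between actual and planned duration, divided by the planned duration, times 100. The function name, the suite names, and the durations below are all made up for illustration.

```python
def schedule_slippage(actual_duration, planned_duration):
    """Return schedule slippage as a percentage of the planned duration."""
    if planned_duration <= 0:
        raise ValueError("planned_duration must be positive")
    return (actual_duration - planned_duration) * 100 / planned_duration


# Hypothetical suites: name -> (planned hours, actual hours).
suites = {
    "login": (2.0, 2.5),
    "checkout": (4.0, 4.0),
    "search": (1.0, 0.5),
}

for name, (planned, actual) in suites.items():
    slippage = schedule_slippage(actual, planned)
    # A positive value means the suite finished later than planned.
    print(f"{name}: {slippage:+.1f}%")
```

Any unit works for the durations (hours, days) as long as both arguments use the same one.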

Scheduled automated tests can be delayed for several reasons: testing resources may be unavailable at the scheduled time, the testing system itself may have issues that prevent tests from executing on time, or the testing team may find a problem with certain tests that delays the whole testing process.

Teams can measure slippage across projects to determine whether their scheduling is actually saving time, and to highlight problematic tests or test suites that need improvement.

Schedule Variance

Schedule variance measures, as a percentage, the difference between the actual end date and the planned end date for a project or task. The formula for schedule variance is as follows:

Schedule Variance = ((Actual No. of Days - Estimated No. of Days) / Estimated No. of Days) * 100

This metric gives a single summary figure for how long a testing effort took compared to the plan. By calculating the schedule variance for automated tests alone, you can get a measure of how effective your scheduling efforts are.

Consider the following automated testing effort:

Estimated days for completion: 10

Actual testing days: 14

Schedule variance = ((14-10)/10)*100 = 40%
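Because schedule variance is defined in terms of end dates, one way to sketch the same calculation is from calendar dates rather than day counts. The function name and the specific dates below are assumptions chosen only so that the estimate is 10 days and the actual effort is 14 days, matching the example above.

```python
from datetime import date


def schedule_variance(start, planned_end, actual_end):
    """Return schedule variance (%) from a start date and two end dates."""
    estimated_days = (planned_end - start).days
    actual_days = (actual_end - start).days
    if estimated_days <= 0:
        raise ValueError("planned_end must be after start")
    return (actual_days - estimated_days) * 100 / estimated_days


# Hypothetical dates: 10 estimated days, 14 actual days.
variance = schedule_variance(
    start=date(2024, 1, 1),
    planned_end=date(2024, 1, 11),
    actual_end=date(2024, 1, 15),
)
print(f"Schedule variance: {variance:.0f}%")  # Schedule variance: 40%
```

This sketch counts calendar days. A team that tracks working days only would need to adjust the day counts accordingly.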

Ideally, the variance should be as close to zero as possible. A negative variance in the context of testing means that the testing was completed more quickly than planned, which is also a good sign.

A positive variance can be a problem. The following thresholds can act as a helpful guideline for determining the severity of a positive schedule variance for your tests.

Reducing Schedule Slippage

For any testing effort, it’s important to reduce schedule slippage so that test suites are completed and executed within a reasonable time frame. It is a good idea to define this time frame using the following conventions:

- Non-Risk Adjusted (NRA) Date: The estimated test completion date assuming ideal conditions.

- Risk Adjusted (RA) Date: The estimated test completion date assuming the testing team encounters some issues along the way that need fixing.

Testing efforts that take longer than the RA Date need to be evaluated thoroughly to find out what went wrong. Not every project’s tests will finish by the NRA Date by virtue of the fact that almost every testing team encounters issues. The point is that to reduce schedule slippage, steps should be taken to complete all future testing efforts by the RA Date.
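The NRA and RA conventions above lend themselves to a simple check. The sketch below classifies a completion date against the two estimates. The function name, the returned labels, and the dates are all hypothetical and only illustrate the rule that efforts finishing after the RA Date deserve a closer look.

```python
from datetime import date


def classify_completion(actual, nra_date, ra_date):
    """Classify a test completion date against the NRA and RA estimates."""
    if ra_date < nra_date:
        raise ValueError("RA date should not be earlier than NRA date")
    if actual <= nra_date:
        return "met NRA date"
    if actual <= ra_date:
        return "met RA date"
    # Later than the risk-adjusted estimate: evaluate what went wrong.
    return "missed RA date"


# Hypothetical estimates for one testing effort.
nra = date(2024, 3, 10)
ra = date(2024, 3, 15)

print(classify_completion(date(2024, 3, 12), nra, ra))
print(classify_completion(date(2024, 3, 18), nra, ra))
```

Tracking how often efforts land in each bucket over time gives a quick view of whether the RA estimates are realistic.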

Scheduling tests can reduce schedule slippage by improving the automation and speed of the testing effort. Simple human errors that result in lost time, such as forgetting to execute certain automated tests, can be avoided by scheduling those tests to run automatically. Ensuring that resources are available for the scheduled tests at their planned execution time can further reduce schedule slippage.

Closing Thoughts

- Test scheduling is a time-saver for the testing team, and helps improve the overall testing process.

- Use the schedule slippage and schedule variance test metrics to evaluate whether your team’s testing efforts are completing within a reasonable time.

- No test metric is perfect, though. Sealights' continuous testing platform provides a unified dashboard across all types of tests that gives a holistic view of software testing and quality.
